Bird’s eye view

GoAkt is a framework for building concurrent, distributed, and fault-tolerant systems in Go using the actor model.

Every unit of computation is an actor—a lightweight, isolated entity that communicates exclusively through messages.

The Actor System is the top-level runtime that hosts actors and orchestrates messaging, clustering, and lifecycle.
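To make the idea of an isolated, message-driven unit concrete, here is a minimal sketch that uses only the Go standard library. It is not GoAkt's API: `counterActor`, `spawnCounter`, `Tell`, `Ask`, and the message types are hypothetical names chosen for illustration. It assumes only that an actor owns its state and reacts to one mailbox message at a time.

```go
package main

import (
	"fmt"
	"sync"
)

// increment asks the actor to add N to its counter (hypothetical message).
type increment struct{ N int }

// query asks the actor to send its current counter on Reply.
type query struct{ Reply chan int }

// counterActor owns a mailbox and processes one message at a time.
type counterActor struct {
	mailbox chan any
	done    sync.WaitGroup
}

// spawnCounter starts the actor goroutine and returns a handle to it.
func spawnCounter() *counterActor {
	a := &counterActor{mailbox: make(chan any, 16)}
	a.done.Add(1)
	go func() {
		defer a.done.Done()
		count := 0 // isolated state: no other goroutine can reach it
		for msg := range a.mailbox {
			switch m := msg.(type) {
			case increment:
				count += m.N
			case query:
				m.Reply <- count
			}
		}
	}()
	return a
}

// Tell enqueues a message without waiting for a reply.
func (a *counterActor) Tell(msg any) { a.mailbox <- msg }

// Ask sends a query and blocks until the actor replies.
func (a *counterActor) Ask() int {
	reply := make(chan int, 1)
	a.mailbox <- query{Reply: reply}
	return <-reply
}

// Stop closes the mailbox and waits for the actor to drain it.
func (a *counterActor) Stop() {
	close(a.mailbox)
	a.done.Wait()
}

func main() {
	a := spawnCounter()
	for i := 1; i <= 3; i++ {
		a.Tell(increment{N: i})
	}
	fmt.Println(a.Ask()) // 6: messages are processed in mailbox order
	a.Stop()
}
```

Because the counter lives inside the goroutine, no locks are needed. The mailbox is the only way in, which is the property the actor model relies on.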

Three deployment modes

| Mode | Description |
|---|---|
| Standalone | Single process. Actors communicate in-process. |
| Clustered | Multiple nodes. Discovery via Consul, etcd, Kubernetes, NATS, mDNS, or static. Location-transparent actors. |
| Multi-Datacenter | Multiple clusters across DCs. Pluggable control plane (NATS JetStream or etcd). DC-aware placement. |

Core building blocks

| Concept | Role |
|---|---|
| Reactive stream | Composable stream.Source → Flow stages → Sink pipelines, materialized with RunnableGraph.Run on an ActorSystem. Stages run as actors with demand-driven backpressure. See Streams. |
| CRDT / Replicator | Conflict-free replicated data types (crdt package), merged by a per-node Replicator system actor. Replication is eventually consistent and separate from the Olric actor/grain registry (quorum-strong). See Distributed data. |
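The demand-driven backpressure mentioned for reactive streams can be sketched with plain channels. This is an illustration under stated assumptions, not the stream package's API: `source`, `mapFlow`, and `collectSink` are hypothetical helpers, and the sink signals demand directly to the source, one element at a time.

```go
package main

import "fmt"

// source emits 1..n, one element per demand token received from downstream.
func source(n int, demand <-chan struct{}) <-chan int {
	out := make(chan int)
	go func() {
		defer close(out)
		for i := 1; i <= n; i++ {
			<-demand // wait until the sink asks for the next element
			out <- i
		}
	}()
	return out
}

// mapFlow applies f to every element passing through.
func mapFlow(in <-chan int, f func(int) int) <-chan int {
	out := make(chan int)
	go func() {
		defer close(out)
		for v := range in {
			out <- f(v)
		}
	}()
	return out
}

// collectSink requests one element at a time and gathers the results.
func collectSink(in <-chan int, demand chan<- struct{}) []int {
	var got []int
	for {
		demand <- struct{}{} // signal demand for exactly one element
		v, ok := <-in
		if !ok {
			return got
		}
		got = append(got, v)
	}
}

func main() {
	// Capacity 1 lets the sink post its final, unanswered demand token
	// without blocking after the source has completed.
	demand := make(chan struct{}, 1)
	src := source(5, demand)
	doubled := mapFlow(src, func(v int) int { return v * 2 })
	fmt.Println(collectSink(doubled, demand)) // [2 4 6 8 10]
}
```

The source never produces faster than the sink consumes, because each element is gated on a demand token. A real implementation would typically request batches rather than single elements.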

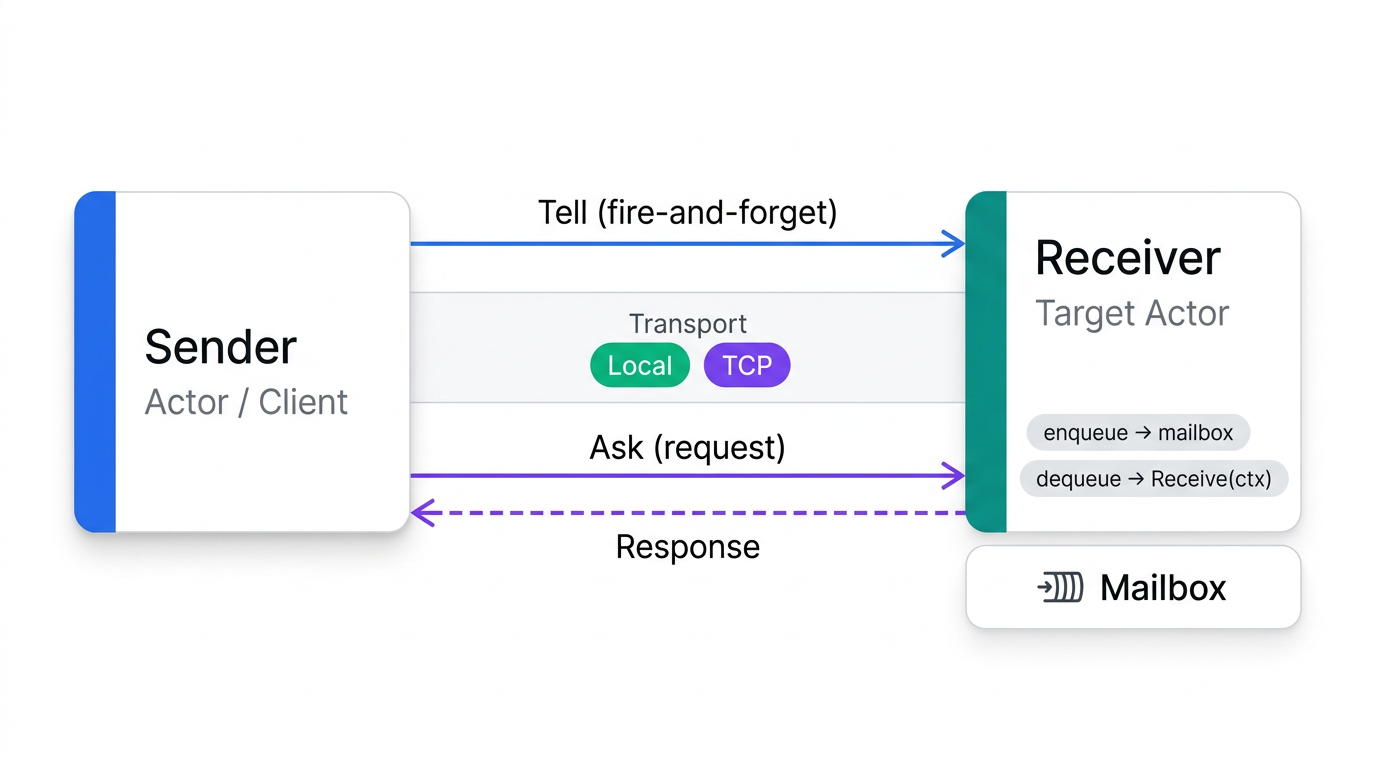

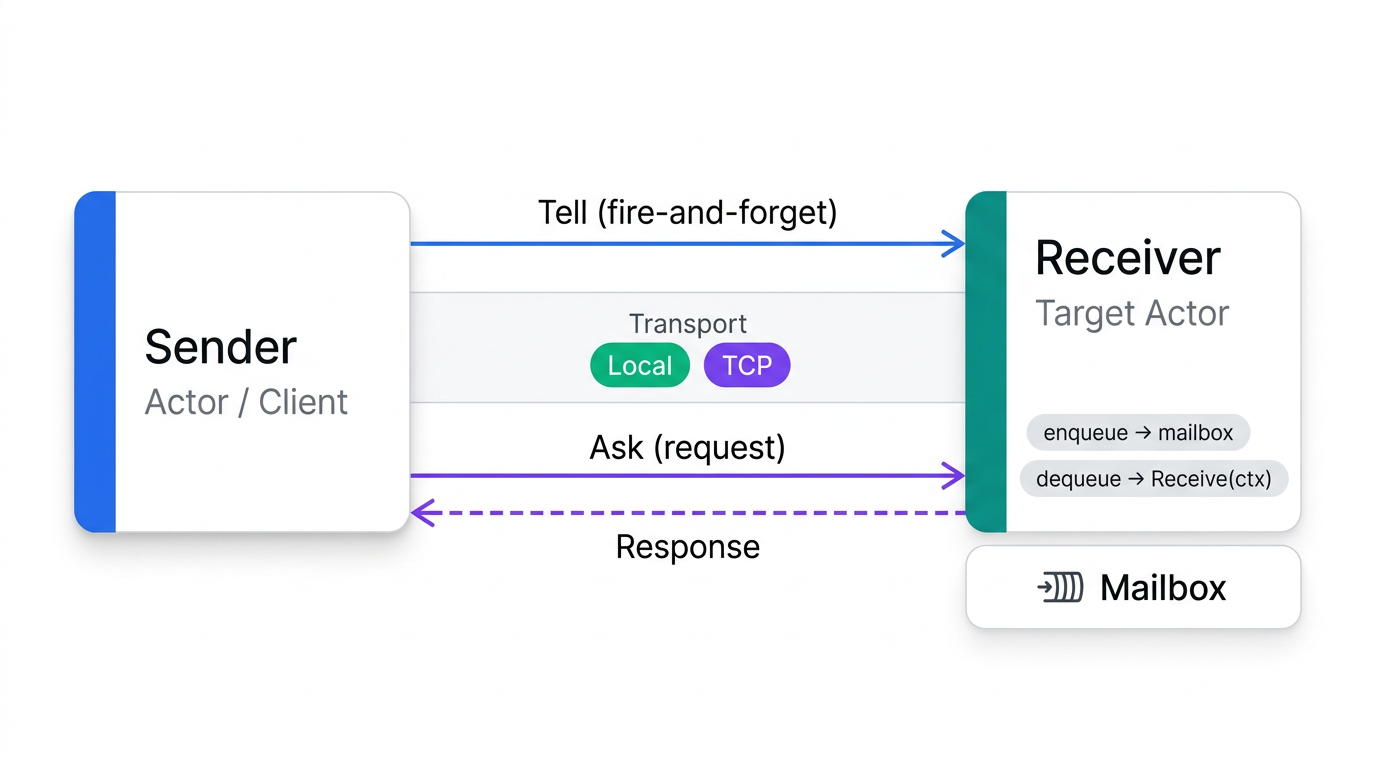

Message flow

For remote messages, the remoting layer serializes the payload and sends it over a custom TCP frame protocol with optional compression.

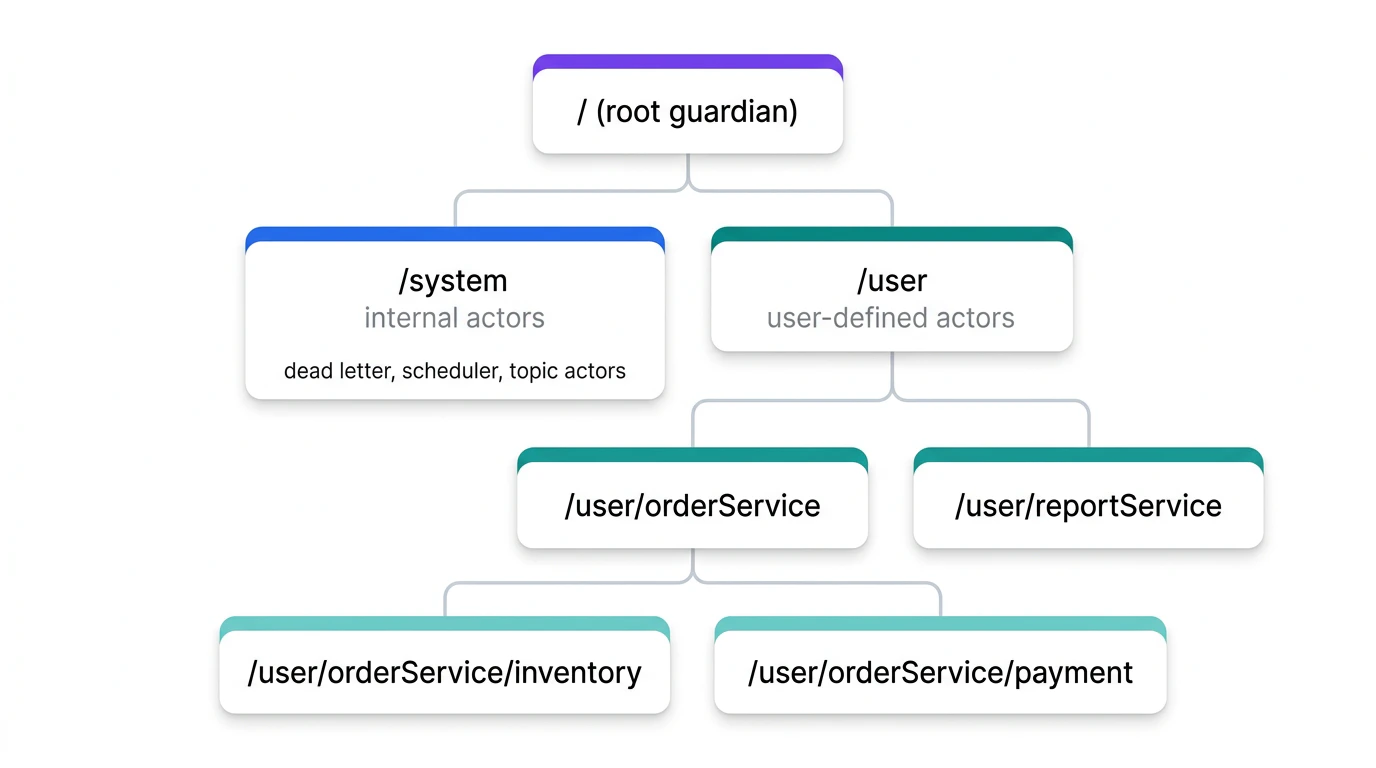

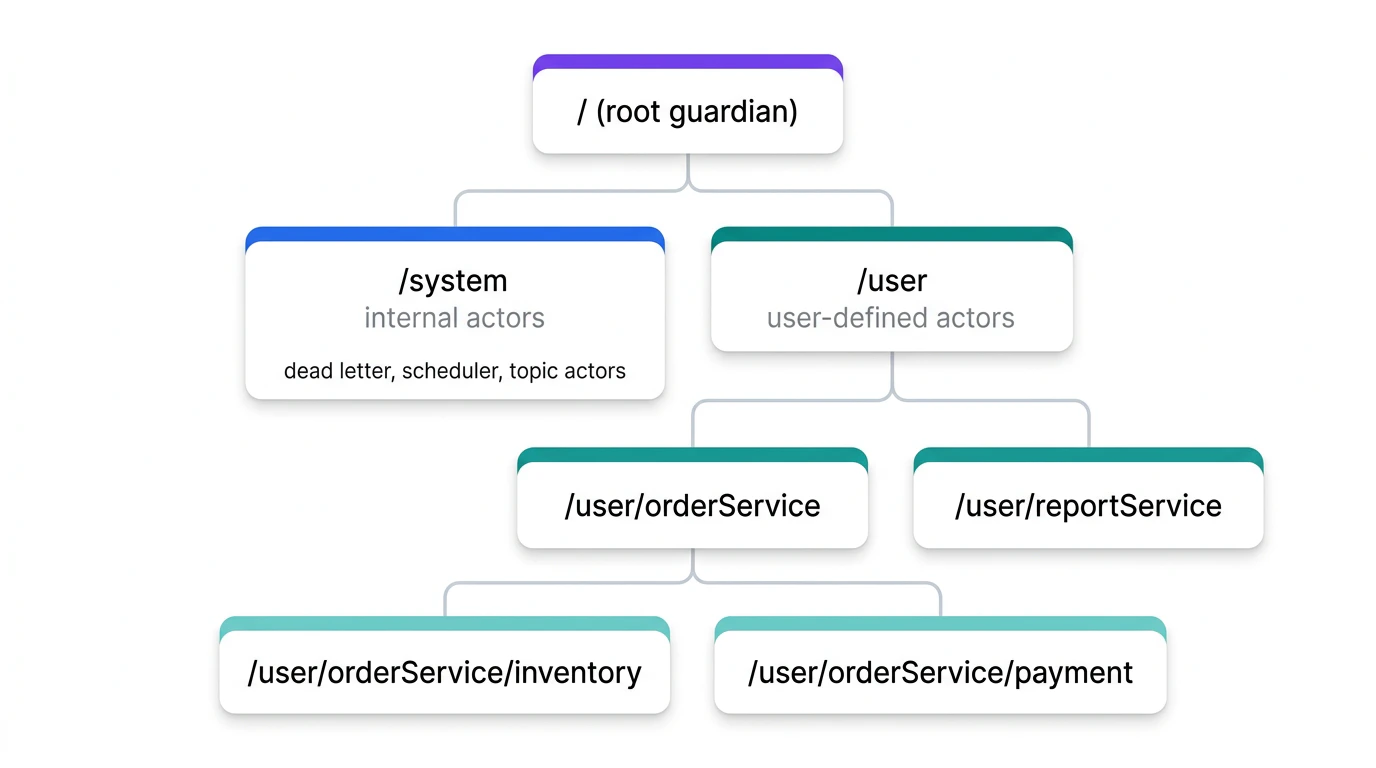

Actor hierarchy

When a parent stops, all children stop first (depth-first). A parent supervises its children.
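The depth-first stop order can be sketched as a post-order walk over the actor tree. The `node` type and `stop` method are hypothetical stand-ins, not GoAkt types, and stopping siblings sequentially in declaration order is an assumption of this sketch.

```go
package main

import "fmt"

// node is a hypothetical stand-in for an actor in the supervision tree.
type node struct {
	name     string
	children []*node
}

// stop stops every descendant before the node itself and appends names
// to order in the sequence in which they stopped.
func (n *node) stop(order *[]string) {
	for _, c := range n.children {
		c.stop(order)
	}
	*order = append(*order, n.name)
}

func main() {
	root := &node{name: "parent", children: []*node{
		{name: "child-a", children: []*node{{name: "grandchild"}}},
		{name: "child-b"},
	}}
	var order []string
	root.stop(&order)
	fmt.Println(order) // [grandchild child-a child-b parent]
}
```

No actor outlives its supervisor: by the time a parent finishes stopping, its entire subtree has already stopped.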

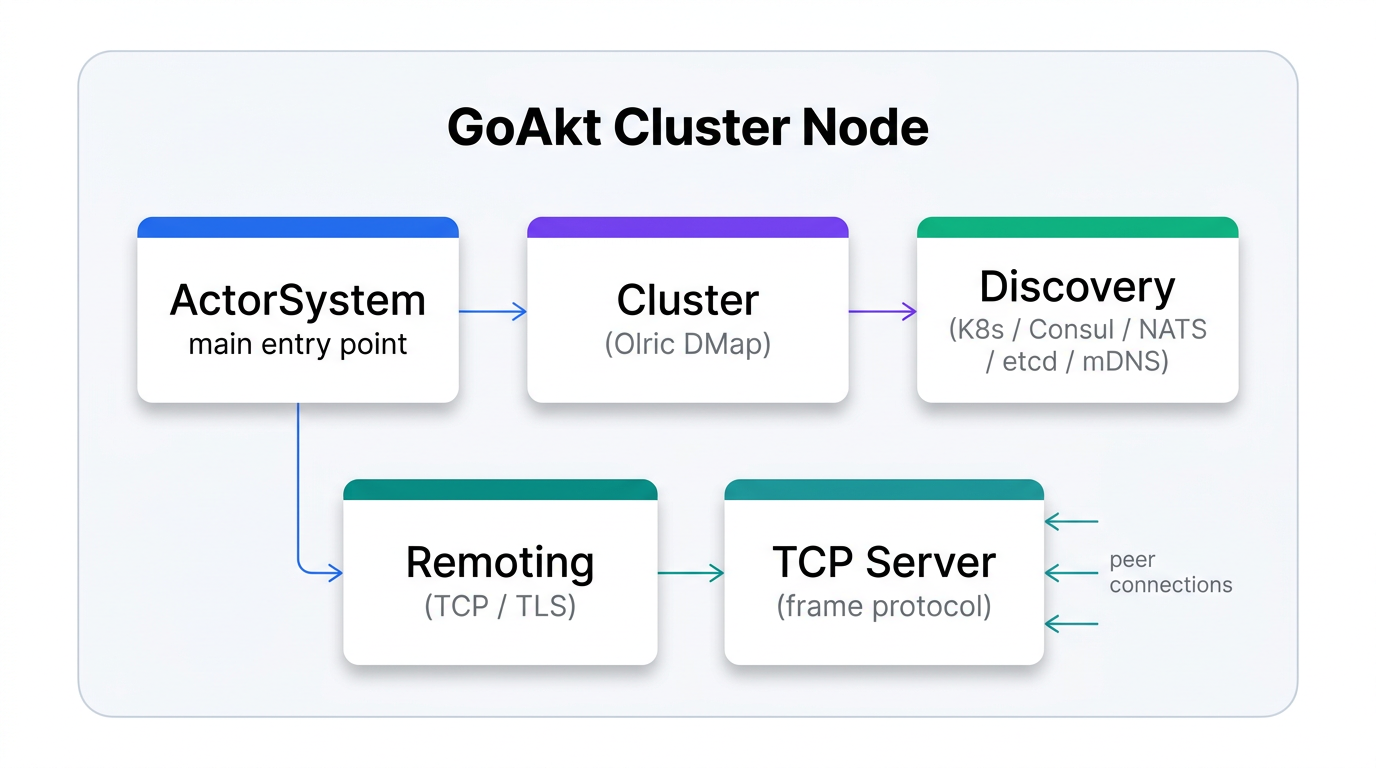

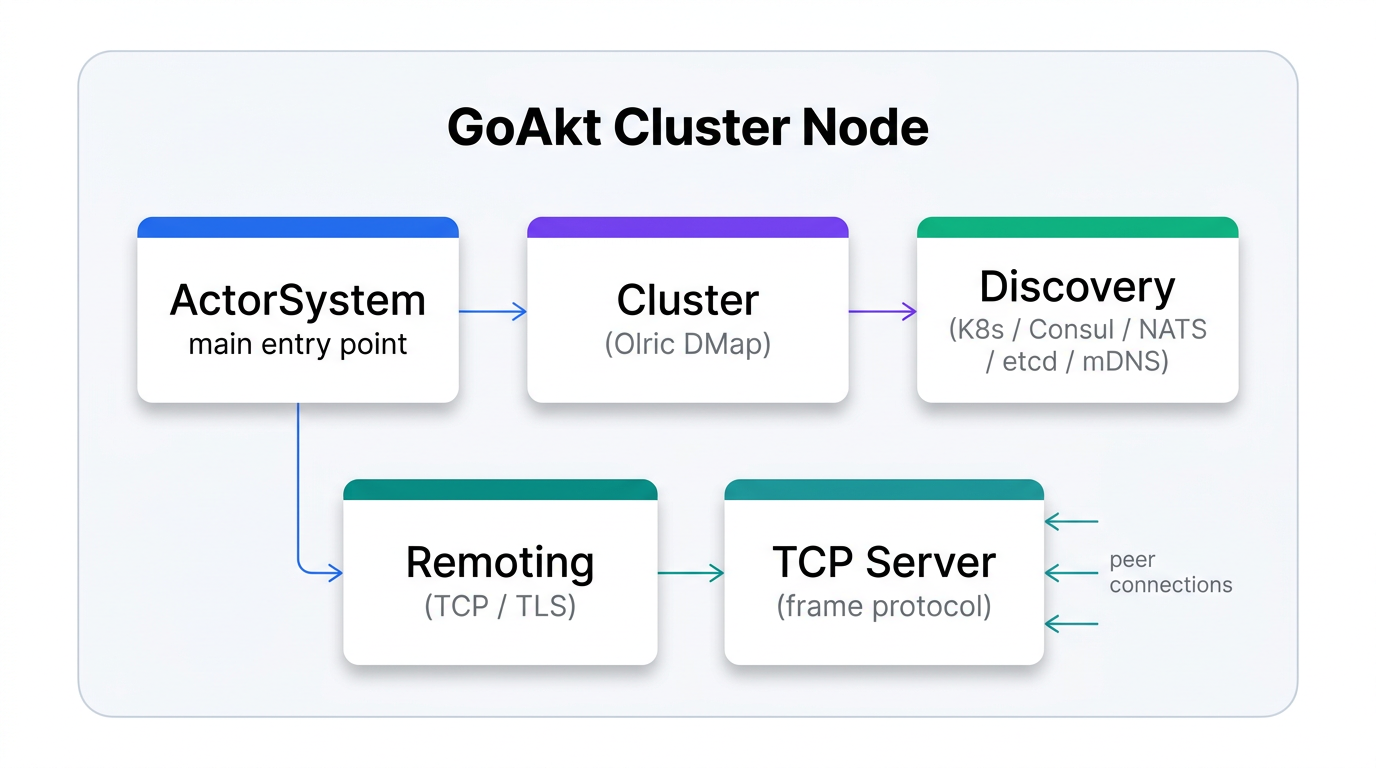

Cluster architecture

Placement and membership state (which actors and grains live where) is stored in Olric (a distributed hash map) with configurable quorum. Node membership uses HashiCorp Memberlist. CRDT application state (when enabled via ClusterConfig.WithCRDT) is replicated separately by the Replicator actor using delta broadcast over the topic bus, not through Olric DMap writes.
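To show why CRDT state can be replicated without quorum writes, here is a minimal grow-only counter (G-Counter) sketch. It is not the crdt package's API: `gCounter` and its methods are hypothetical, and for brevity it merges full state rather than the deltas the Replicator broadcasts.

```go
package main

import "fmt"

// gCounter is a grow-only counter: one monotonically increasing slot per node.
type gCounter map[string]uint64

// inc records n increments performed by the given node.
func (g gCounter) inc(node string, n uint64) { g[node] += n }

// merge takes the per-node maximum, which is commutative, associative,
// and idempotent, so replicas converge regardless of delivery order.
func (g gCounter) merge(other gCounter) {
	for node, v := range other {
		if v > g[node] {
			g[node] = v
		}
	}
}

// value is the sum of all per-node slots.
func (g gCounter) value() uint64 {
	var sum uint64
	for _, v := range g {
		sum += v
	}
	return sum
}

func main() {
	a, b := gCounter{}, gCounter{}
	a.inc("node-a", 3)
	b.inc("node-b", 4)
	a.merge(b)
	b.merge(a)
	b.merge(a) // re-delivery is harmless
	fmt.Println(a.value(), b.value()) // 7 7
}
```

Because merging is order-independent and idempotent, replicas can accept updates locally and exchange state over a broadcast channel. They agree once every update has been delivered at least once, which is the eventual consistency described above.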

Deep dives

The following pages describe the internal architecture of each major subsystem:

| Subsystem | Page | What it covers |
|---|---|---|
| Scheduler | Dispatcher Pool | Fixed worker pool, actor state machine, ready queue, work stealing, system-message priority, throughput tuning. |

See also